About

Quick snapshot of who I am and what I build.

AI Engineer with industry and academic experience building production-grade deep learning and Generative AI systems. Specialized in transformer architectures, diffusion models, and Retrieval-Augmented Generation (RAG) pipelines using PyTorch, LangChain, and vector databases. Strong ownership across the full ML lifecycle—from large-scale data preprocessing and model training to evaluation, experiment tracking, and scalable deployment via FastAPI, Docker, MLflow, and AWS. Proven ability to translate research-driven models into high-performance, deployable AI solutions for real-world applications.

Projects

Selected work with measurable outcomes.

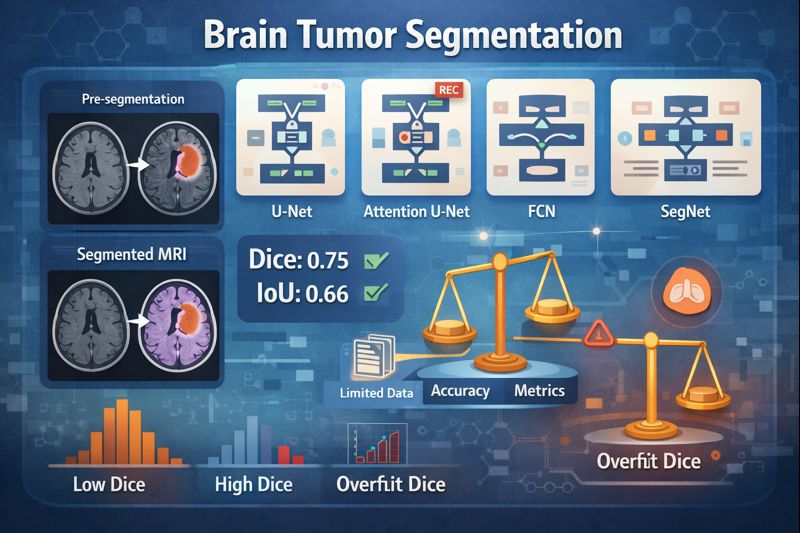

Brain Tumor Segmentation

Evaluated U-Net, Attention U-Net, FCN, and SegNet for MRI segmentation. FCN achieved the best generalization (Dice 0.75, IoU 0.66). Analyzed tradeoffs between accuracy, overlap metrics, and overfitting under limited data.
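For readers unfamiliar with the overlap metrics quoted above, here is a minimal sketch of how Dice and IoU can be computed for a pair of binary masks. The function names, the `eps` smoothing term, and the toy masks are illustrative assumptions, not the project's actual evaluation code.

```python
import numpy as np

def dice_score(pred, target, eps=1e-7):
    """Dice coefficient: 2 * |A ∩ B| / (|A| + |B|) for binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    intersection = np.logical_and(pred, target).sum()
    # eps avoids division by zero when both masks are empty (assumed convention)
    return (2.0 * intersection + eps) / (pred.sum() + target.sum() + eps)

def iou_score(pred, target, eps=1e-7):
    """Intersection over Union: |A ∩ B| / |A ∪ B| for binary masks."""
    pred = np.asarray(pred, dtype=bool)
    target = np.asarray(target, dtype=bool)
    intersection = np.logical_and(pred, target).sum()
    union = np.logical_or(pred, target).sum()
    return (intersection + eps) / (union + eps)

# Toy 2x3 masks (hypothetical): 2 overlapping pixels, 3 predicted, 3 true
pred_mask = [[1, 1, 0], [0, 1, 0]]
true_mask = [[1, 0, 0], [0, 1, 1]]
dice = dice_score(pred_mask, true_mask)  # about 0.667
iou = iou_score(pred_mask, true_mask)    # 0.5
```

Dice weights the overlap twice, so for any single mask pair it is at least as large as IoU; reporting both makes it easier to compare against results that quote only one of them.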

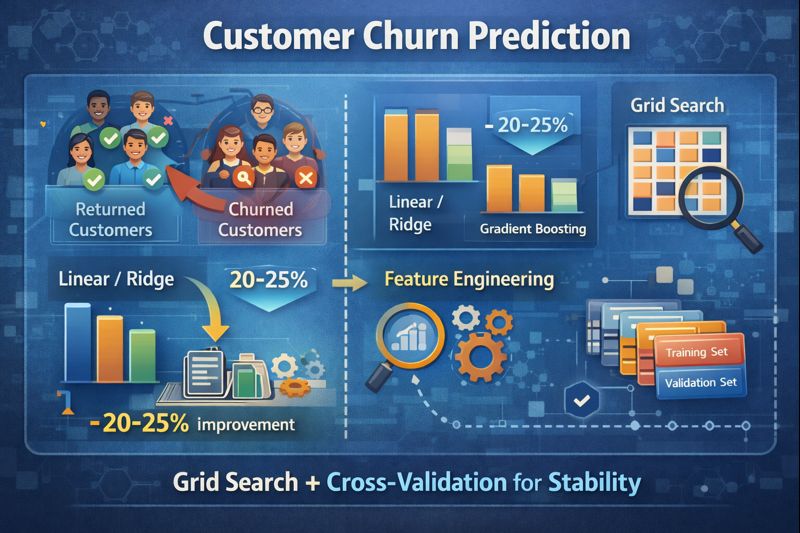

Customer Churn Prediction

Reduced churn prediction error (MAE/RMSE) by 20–25% by moving from Linear/Ridge models to Gradient Boosting. Feature engineering delivered an additional 10–15% reduction; grid search with cross-validation kept results stable.

Ride Demand Prediction (Time Series)

Built a forecasting pipeline using temporal and spatial features across 12+ months of data. Reduced RMSE by 12–18% vs. seasonal baselines and translated forecasts into operational planning insights.


Experience

Work that proves execution.

AI Engineer — Prevail Infotech INC

Sandy, Utah, USA · July 2024 – Present

- Architected and deployed an enterprise Knowledge Intelligence Platform using Retrieval-Augmented Generation (RAG), indexing 50K+ internal documents to enable natural language querying and automated knowledge retrieval across business units.

- Designed and implemented end-to-end Generative AI workflows leveraging Large Language Models (LLMs) for enterprise automation, semantic search, and contextual Q&A systems.

- Built RAG pipelines using LangChain, FAISS/Pinecone vector databases, and HuggingFace embeddings, improving contextual response accuracy by 25–35% through optimized chunking, retrieval tuning, and metadata filtering strategies.

- Developed scalable document ingestion and preprocessing pipelines for unstructured data (PDFs, reports, internal knowledge bases), enabling semantic indexing and structured summarization.

- Reduced hallucination rates by approximately 18% through retrieval re-ranking, prompt engineering refinements, and structured output formatting with validation checks.

- Deployed containerized AI services via FastAPI and Docker on AWS EC2, handling 5K+ requests/day with sub-300ms average retrieval latency and high system reliability.

- Fine-tuned transformer-based models for domain-specific NLP tasks using parameter-efficient tuning techniques and iterative prompt optimization to improve domain alignment and inference performance.

- Implemented monitoring and evaluation frameworks to measure retrieval quality, generation relevance, and system stability using automated metrics and human feedback loops.

Machine Learning Engineer — Next Cloudwave Solutions Pvt Ltd

Hyderabad, India · June 2022 – Dec 2023

- Led development of an enterprise retail demand forecasting system processing 1M+ historical transaction records to generate SKU-level weekly predictions for inventory optimization.

- Designed and deployed scalable end-to-end ML pipelines from data ingestion and preprocessing to model serving using Python, scikit-learn, and SQL.

- Built and optimized supervised learning models (classification & regression), improving predictive accuracy by 15–22% through advanced feature engineering and hyperparameter tuning.

- Engineered robust data preprocessing workflows for large structured datasets (1M+ records), improving downstream model stability and consistency.

- Productionized ML models via RESTful APIs (FastAPI), enabling real-time and batch inference integration into client systems.

- Automated model retraining and evaluation workflows, reducing experimentation time by approximately 30% and improving reproducibility.

- Implemented performance monitoring, validation protocols, and A/B testing to continuously enhance inference quality and model reliability in production.

Skills

Tools I use to ship and scale.

Programming

AI / ML

Frameworks

Evaluation

MLOps / Deployment

Cloud / Databases

Certifications

Verified learning and credentials.

- Google Cloud Generative AI Certificate — Google Cloud Skills Boost

- AWS Machine Learning Foundations — AWS & Udacity

- Microsoft Azure AI Fundamentals (AI-900)

Contact

Let’s connect.